Table of Contents

Hadoop ETL Best Practices: What Works and What to Avoid

Hadoop ETL best practices focus on using distributed processing the way Hadoop was actually designed — not mimicking traditional database patterns. The core principles: use columnar file formats like ORC or Parquet, avoid Views in favor of managed tables, keep partition sizes at 64–128 MB minimum, build pipelines in parallel using phase development, and choose Hive over Pig for long-term maintainability. This guide walks through each of these in detail, plus what to avoid when building or migrating Hadoop ETL pipelines.

Hadoop, an open-source framework has been around for quite some time in the industry. You would see a multitude of articles on how to use Hadoop for various data transformation needs, features, and functionalities.

But do you know what are the Hadoop best practices for ETL? Remember that as this is an open-source project, there are many features available in Hadoop. But it may not be the best option to use them in new Hadoop ETL development. I have seen from experience that old database programmers use Oracle/SQL Server’s techniques in Hive and screw up the performance of the ETL logic.

So, why do these techniques exist when it is not in your best interest to use them? It is because of Hadoop’s open-source nature and competition in the industry. If a client is doing a Teradata conversion project, they can save enough dollars by just converting Teradata logic to Hive, and for performance gain, they don’t mind paying for additional hardware. This is why all the features of all the traditional databases exist in Hive.

Hadoop ETL vs Traditional ETL: What's Different

Traditional ETL tools were built around centralized, batch-oriented processing. Data gets extracted from a source, transformed in a staging area, and then loaded into a data warehouse. This works well when data volumes are manageable and the source systems are mostly relational.

Hadoop changes the equation. Because it's built on distributed storage and parallel compute, it can handle petabytes of structured, semi-structured, and unstructured data that traditional ETL tools simply can't process at scale. The transformation logic runs across a cluster rather than on a single server, which is why patterns that work in Oracle or SQL Server often hurt performance when ported directly to Hive.

There's also an important distinction between ETL and ELT in the Hadoop context. With ELT (Extract, Load, Transform), raw data is loaded directly into HDFS first, and transformation happens later on demand. This suits Hadoop's architecture well because the distributed compute layer handles transformation more efficiently than a staging server would. Many modern Hadoop pipelines use ELT rather than traditional ETL for this reason.

|

|

Traditional ETL |

Hadoop ETL/ELT |

|

Processing model |

Centralized, sequential |

Distributed, parallel |

|

Data types handled |

Primarily relational |

Structured, semi-structured, unstructured |

|

Transformation location |

Staging server |

In-cluster (Hive, Spark, MapReduce) |

|

Scalability |

Vertical (bigger server) |

Horizontal (more nodes) |

|

Best for |

Moderate volumes, relational data |

Petabyte-scale, diverse data sources |

Hadoop ETL Best Practices: Dos and Don'ts

If you are writing a new logic in Hadoop, use the proposed methodology for Hadoop development.

Core Principles to Understand Before You Write a Single Line

1. Storage is cheap, processing is expensive: Design your pipelines to minimize compute, not storage. Materializing intermediate results into tables is almost always worth it.

2. Jobs take seconds to minutes, not milliseconds: This isn't a transactional system. Design your workflows around batch efficiency, not response time.

3. Source data doesn't change until you import it again: This is why Views built for transactional systems make no sense here. The data is static between runs, so pre-materialized tables are always the right call.

Do not use Views

Views are great for transactional systems, where data is frequently changing, and a programmer can consolidate sophisticated logic into a View. When source data is not changing, create a table instead of a View.

Do not use small partitions

In a transactional system, to reduce the query time, a simple approach is to partition the data based on a query where clause. While in Hadoop, the mapping is far cheaper than start and stop of a container. So use partition only when the data size of each partition is about a block size or more (64/128 MB).

A good rule of thumb: if your partition data size is smaller than one HDFS block (64 or 128 MB), you're over-partitioning. The overhead of container start and stop will cost you more than the partition saves.

Use ORC or Parquet File format

By switching from text-based formats to ORC or Parquet, you get columnar storage, built-in compression, and predicate pushdown — meaning queries only read the columns they need instead of scanning full rows. In practice, this translates to significant reductions in both I/O and storage costs. ORC tends to perform better with Hive workloads; Parquet is often preferred when you're also using Spark downstream.

Avoid Sequential Programming (Phase Development)

We need to find ways, where we can program in parallel and use Hadoop’s processing power. The core of this is breaking logic into multiple phases, and we should be able to run these steps in parallel.

Think of each phase as an independent unit that can be parallelized. If Phase B doesn't depend on Phase C, they should run at the same time, not in sequence.

Managed Table vs. External Table

Adopt a standard and stick to it but I recommend using Managed Tables. They are always better to govern. You should use External Tables when you are importing data from the external system. You need to define a schema for it after that entire workflow can be created using Hive scripts leveraging managed tables.

Pig vs. Hive

If you ask any Hadoop vendor (Cloudera, Hortonworks, etc.), you will not get a definitive answer as they support both languages. Sometimes, logic in Hive can be quite complicated compared to Pig but I would still advise using Hive if possible. This is because we would need resources in the future to maintain the code.

There are very few people who know Pig, and it is a steep learning curve. And also, there is not much investment happening in Pig as compared to Hive. Various industries are using Hive and companies like Cloudera, AWS, and Microsoft continue to invest heavily in Hive, while Pig has seen significantly less development activity. Unless you're maintaining existing Pig code, Hive is the clear choice for new development.

How Phase Development Works in Practice

So how would you get the right outcome without using Views? You would get that by using Phase Development. Keep parking processed data into various phases and then keep treating it to obtain a final result.

Tools for Hadoop ETL Pipelines

Choosing the right tools for each stage of your pipeline matters as much as the logic itself. Here's how the most common ones fit together:

Ingestion: Apache Sqoop handles bulk data transfer from relational databases into HDFS. For streaming or event-driven ingestion, Apache Kafka or Apache Flume are better suited to low-latency data arrival.

Transformation: Apache Hive handles SQL-based transformations well for most batch workloads. For more complex or performance-intensive jobs, Apache Spark has largely replaced MapReduce as the transformation engine of choice — it's faster in-memory and more flexible for iterative processing.

Orchestration: Apache Oozie is the traditional workflow scheduler for Hadoop. Apache Airflow has become increasingly popular in modern data engineering stacks for its flexibility and better UI.

File formats: ORC for Hive-heavy workloads. Parquet when your pipeline touches Spark or needs cross-platform compatibility.

Testing your pipelines — checking data accuracy, completeness, and behavior under large loads — should not be an afterthought. Validate transformations using sampling on representative data subsets before pushing to production.

How Hadoop ETL Has Evolved: What's Changed Since Batch-Only Pipelines

The original Hadoop ETL model was pure batch processing. You'd run large jobs overnight, and results would be ready in the morning. That model still exists, but it's no longer the full picture.

Real-time and hybrid pipelines are now common. Tools like Apache Kafka handle continuous data ingestion, while Apache Flink or Spark Streaming allow transformations to happen as data arrives rather than in scheduled batches. Organizations in finance, retail, and telecom have been early adopters of this model because they need near-real-time insights to act on.

Cloud-managed Hadoop environments have also changed how teams think about infrastructure. Services like Amazon EMR, Google Dataproc, and Azure HDInsight let you run Hadoop workloads without managing physical clusters. You get on-demand compute scaling and tighter integration with cloud storage and BI tools, which reduces operational overhead significantly.

Finally, data governance is no longer separate from pipeline design. Teams are increasingly integrating data catalogs, lineage tracking, and metadata tools directly into their Hadoop workflows. This gives you visibility into where data came from, how it was transformed, and whether it meets compliance requirements — all of which matter when your pipelines are running at scale.

Hadoop ETL Architecture Best Practices

When building a Hadoop ETL pipeline, the cleanest architectural pattern separates extraction, transformation, and load into modular, independently testable components.

Extract: Pull from source systems using connectors designed for distributed environments. Sqoop works well for relational database sources. Kafka handles streaming and event-driven sources. Avoid writing custom extraction scripts unless there's no alternative — they're harder to maintain and scale.

Transform: Match your compute engine to your workload. Hive SQL works well for structured transformations on stable schemas. Spark is better for complex logic, iterative processing, or when you need faster execution times. MapReduce is still valid for simple, highly parallelizable jobs but is rarely the first choice in modern pipeline design.

Load: Write transformed data into target tables or data lake storage in your chosen format (ORC or Parquet). Partition output by the fields most commonly used in downstream queries — typically date, region, or category. Avoid over-partitioning as noted above.

Keep each stage independently deployable. If your transformation logic changes, you shouldn't need to touch the extraction or load components.

Conclusion

The most common mistake in Hadoop ETL development is treating it like a traditional relational database with more hardware. It isn't. The architecture rewards parallel thinking, distributed design, and the right file formats — and punishes patterns copied from Oracle or SQL Server.

If you're building or refactoring Hadoop ETL pipelines, start with the fundamentals: managed tables over views, ORC or Parquet over text, phase development over sequential logic, and Hive over Pig for anything you'll need to maintain long-term. Layer in Spark for performance-intensive transforms, and use Sqoop or Kafka for ingestion depending on whether your sources are batch or streaming.

For teams managing Hadoop pipelines at scale, data governance doesn't end at the pipeline level. Tracking lineage, enforcing access policies, and maintaining a catalog of what's in your data lake becomes critical as volume and complexity grow. OvalEdge gives your team a unified view of metadata, data lineage, and access governance — so you know exactly what's flowing through your pipelines, where it came from, and whether it's compliant. Schedule a personalized demo to see how it fits your data environment.

FAQ’s

1. What is Hadoop ETL and why is it important?

Hadoop ETL is the process of extracting data from source systems, transforming it using distributed compute engines like Hive or Spark, and loading it into HDFS or downstream analytics platforms. It's important because traditional ETL tools can't efficiently process the volume, variety, and velocity of data that modern organizations generate. Hadoop handles petabyte-scale workloads that would overwhelm centralized systems.

2. What are the most important ETL best practices in Hadoop?

The key practices are: use ORC or Parquet instead of text-based file formats, avoid Views in favor of managed tables, keep partition sizes at 64–128 MB minimum to avoid container overhead, design pipelines using phase development to maximize parallelism, and choose Hive over Pig for maintainability. For ingestion, use Sqoop for relational sources and Kafka for streaming data.

3. What is the difference between ETL and ELT on Hadoop?

In ETL, data is transformed before it's loaded into the target system. In ELT, raw data is loaded into HDFS first, then transformed on demand using the cluster's compute power. ELT often suits Hadoop better because the distributed architecture handles large-scale transformation more efficiently than a separate staging server.

4. Which tools are used for Hadoop ETL pipelines?

Common tools include Apache Hive for SQL-based transformation, Apache Spark for performance-intensive or complex transforms, Apache Sqoop for ingesting data from relational databases, Apache Kafka for streaming ingestion, and Apache Oozie or Airflow for workflow orchestration. ORC and Parquet are the standard file formats for performant storage.

5. Is Hadoop ETL still relevant in 2025?

Yes, particularly for organizations managing large-scale on-premise data workloads or hybrid architectures. Many teams now combine Hadoop with Spark for faster in-memory processing and cloud-managed environments like Amazon EMR or Azure HDInsight for elastic compute. The core principles of distributed ETL design — parallelism, columnar formats, and modular pipelines — remain valid regardless of the underlying infrastructure.

Deep-dive whitepapers on modern data governance and agentic analytics

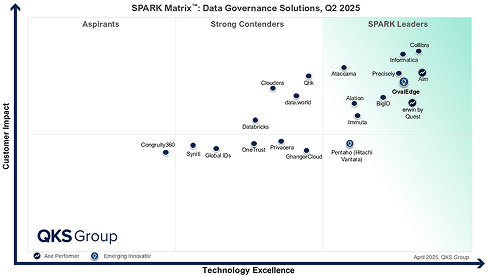

OvalEdge Recognized as a Leader in Data Governance Solutions

3ff1.png)

“Reference customers have repeatedly mentioned the great customer service they receive along with the support for their custom requirements, facilitating time to value. OvalEdge fits well with organizations prioritizing business user empowerment within their data governance strategy.”

aef2.png)

“Reference customers have repeatedly mentioned the great customer service they receive along with the support for their custom requirements, facilitating time to value. OvalEdge fits well with organizations prioritizing business user empowerment within their data governance strategy.”

Gartner, Magic Quadrant for Data and Analytics Governance Platforms, January 2025

Gartner does not endorse any vendor, product or service depicted in its research publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner’s research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.

GARTNER and MAGIC QUADRANT are registered trademarks of Gartner, Inc. and/or its affiliates in the U.S. and internationally and are used herein with permission. All rights reserved.